[seq2seq + Attention] Implementing a French-English Translation Model in PyTorch

2020. 2. 10. 15:25ㆍnlp


https://pytorch.org/tutorials/intermediate/seq2seq_translation_tutorial.html

NLP From Scratch: Translation with a Sequence to Sequence Network and Attention — PyTorch Tutorials 1.4.0 documentation


(Preprocessing steps are omitted.)

The data consists of French-English sentence pairs with the same meaning.

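    from __future__ import unicode_literals, print_function, division
    from io import open
    import unicodedata
    import string
    import re
    import random

    import torch
    import torch.nn as nn
    from torch import optim
    import torch.nn.functional as F

    # These names belong to the omitted preprocessing; the values follow the linked tutorial.
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    SOS_token = 0
    EOS_token = 1
    MAX_LENGTH = 10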

1) Encoder

    class EncoderRNN(nn.Module):
        def __init__(self, input_size, hidden_size):
            super(EncoderRNN, self).__init__()
            self.hidden_size = hidden_size
            self.embedding = nn.Embedding(input_size, hidden_size)
            self.gru = nn.GRU(hidden_size, hidden_size)

        def forward(self, input, hidden):
            embedded = self.embedding(input).view(1, 1, -1)
            output = embedded
            # nn.GRU expects input of shape (seq_len, batch, input_size).
            # One token at a time with batch size 1 gives (1, 1, hidden_size).
            output, hidden = self.gru(output, hidden)
            return output, hidden

        def initHidden(self):
            # The first hidden state is initialized to an all-zero tensor.
            return torch.zeros(1, 1, self.hidden_size, device=device)
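
The shape question in the encoder comment can be checked directly. The following is a minimal sketch, assuming the default `batch_first=False` layout of `nn.GRU`; `hidden_size = 8` and `vocab_size = 20` are illustrative values that do not come from the post.

```python
import torch
import torch.nn as nn

hidden_size = 8   # illustrative size
vocab_size = 20   # illustrative vocabulary size

embedding = nn.Embedding(vocab_size, hidden_size)
gru = nn.GRU(hidden_size, hidden_size)  # batch_first=False by default

# One token: (seq_len=1, batch=1, hidden_size), as in EncoderRNN.forward
token = torch.tensor([3])
embedded = embedding(token).view(1, 1, -1)
hidden = torch.zeros(1, 1, hidden_size)  # (num_layers=1, batch=1, hidden_size)

output, hidden = gru(embedded, hidden)
print(output.shape)  # torch.Size([1, 1, 8])
print(hidden.shape)  # torch.Size([1, 1, 8])

# The first dimension is not limited to 1: a whole sentence can be fed at once.
sentence = torch.tensor([3, 5, 7])
outputs, last_hidden = gru(embedding(sentence).unsqueeze(1))  # input is (3, 1, 8)
print(outputs.shape)  # torch.Size([3, 1, 8])
```

So `(1, 1, *)` is a consequence of processing one word per call with batch size 1, not a requirement of the GRU itself.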

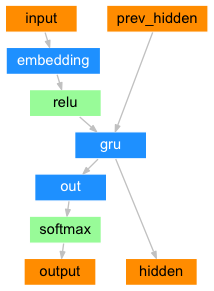

2) Simple Decoder

    class DecoderRNN(nn.Module):
        def __init__(self, hidden_size, output_size):
            super(DecoderRNN, self).__init__()
            self.hidden_size = hidden_size
            self.embedding = nn.Embedding(output_size, hidden_size)
            self.gru = nn.GRU(hidden_size, hidden_size)
            self.out = nn.Linear(hidden_size, output_size)
            self.softmax = nn.LogSoftmax(dim=1)

        def forward(self, input, hidden):
            output = self.embedding(input).view(1, 1, -1)
            output = F.relu(output)
            output, hidden = self.gru(output, hidden)
            # Project the GRU output to vocabulary size, then take log-probabilities.
            output = self.softmax(self.out(output[0]))
            return output, hidden

        def initHidden(self):
            return torch.zeros(1, 1, self.hidden_size, device=device)

3) Attention Decoder

    class AttnDecoderRNN(nn.Module):
        def __init__(self, hidden_size, output_size, dropout_p=0.1, max_length=MAX_LENGTH):
            super(AttnDecoderRNN, self).__init__()
            self.hidden_size = hidden_size
            self.output_size = output_size
            self.dropout_p = dropout_p
            self.max_length = max_length

            self.embedding = nn.Embedding(self.output_size, self.hidden_size)
            self.attn = nn.Linear(self.hidden_size * 2, self.max_length)
            self.attn_combine = nn.Linear(self.hidden_size * 2, self.hidden_size)
            self.dropout = nn.Dropout(self.dropout_p)
            self.gru = nn.GRU(self.hidden_size, self.hidden_size)
            self.out = nn.Linear(self.hidden_size, self.output_size)

        def forward(self, input, hidden, encoder_outputs):
            embedded = self.embedding(input).view(1, 1, -1)
            embedded = self.dropout(embedded)
            # torch.cat concatenates tensors along the given dimension (here dim 1).
            attn_weights = F.softmax(
                self.attn(torch.cat((embedded[0], hidden[0]), 1)), dim=1)
            # torch.bmm is a batched matrix multiplication: (b, n, m) x (b, m, p) -> (b, n, p).
            # unsqueeze(k) inserts a new dimension of size 1 at position k:
            # a tensor of shape (3,) becomes (1, 3) after unsqueeze(0) and (3, 1) after unsqueeze(1).
            attn_applied = torch.bmm(attn_weights.unsqueeze(0),
                                     encoder_outputs.unsqueeze(0))
            output = torch.cat((embedded[0], attn_applied[0]), 1)
            output = self.attn_combine(output).unsqueeze(0)
            output = F.relu(output)
            output, hidden = self.gru(output, hidden)
            output = F.log_softmax(self.out(output[0]), dim=1)
            return output, hidden, attn_weights

        def initHidden(self):
            return torch.zeros(1, 1, self.hidden_size, device=device)
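
The `unsqueeze` and `torch.bmm` steps in the attention decoder are easier to follow with concrete shapes. This is a small sketch with random stand-in tensors; `hidden_size = 4` and `max_length = 5` are illustrative sizes, and `scores` stands in for the output of `self.attn(...)`.

```python
import torch
import torch.nn.functional as F

hidden_size, max_length = 4, 5  # illustrative sizes

# unsqueeze(k) inserts a size-1 dimension at position k
x = torch.tensor([1.0, 2.0, 3.0])
print(x.unsqueeze(0).shape)  # torch.Size([1, 3])
print(x.unsqueeze(1).shape)  # torch.Size([3, 1])

# Stand-ins for the attention scores and the stacked encoder outputs
scores = torch.randn(1, max_length)
attn_weights = F.softmax(scores, dim=1)                 # (1, max_length), row sums to 1
encoder_outputs = torch.randn(max_length, hidden_size)  # (max_length, hidden_size)

# (1, 1, max_length) x (1, max_length, hidden_size) -> (1, 1, hidden_size)
attn_applied = torch.bmm(attn_weights.unsqueeze(0),
                         encoder_outputs.unsqueeze(0))
print(attn_applied.shape)  # torch.Size([1, 1, 4])

# The same result written as an explicit weighted sum of encoder outputs
manual = (attn_weights.t() * encoder_outputs).sum(dim=0)
print(torch.allclose(attn_applied[0, 0], manual))  # True
```

In other words, `attn_applied` is the attention-weighted average of the encoder outputs, which is then concatenated with the embedded input.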

Train Model

    teacher_forcing_ratio = 0.5

    # `decoder` must be an AttnDecoderRNN here, since three values are unpacked from each call.
    def train(input_tensor, target_tensor, encoder, decoder, encoder_optimizer,
              decoder_optimizer, criterion, max_length=MAX_LENGTH):
        encoder_hidden = encoder.initHidden()

        encoder_optimizer.zero_grad()
        decoder_optimizer.zero_grad()

        input_length = input_tensor.size(0)
        target_length = target_tensor.size(0)

        encoder_outputs = torch.zeros(max_length, encoder.hidden_size, device=device)

        loss = 0

        for ei in range(input_length):
            encoder_output, encoder_hidden = encoder(
                input_tensor[ei], encoder_hidden)
            encoder_outputs[ei] = encoder_output[0, 0]

        decoder_input = torch.tensor([[SOS_token]], device=device)
        decoder_hidden = encoder_hidden

        use_teacher_forcing = random.random() < teacher_forcing_ratio

        if use_teacher_forcing:
            # Teacher forcing: feed the target as the next input
            for di in range(target_length):
                decoder_output, decoder_hidden, decoder_attention = decoder(
                    decoder_input, decoder_hidden, encoder_outputs)
                loss += criterion(decoder_output, target_tensor[di])
                decoder_input = target_tensor[di]  # Teacher forcing
        else:
            # Without teacher forcing: use its own predictions as the next input
            for di in range(target_length):
                decoder_output, decoder_hidden, decoder_attention = decoder(
                    decoder_input, decoder_hidden, encoder_outputs)
                topv, topi = decoder_output.topk(1)
                decoder_input = topi.squeeze().detach()  # detach from history as input
                loss += criterion(decoder_output, target_tensor[di])
                if decoder_input.item() == EOS_token:
                    break

        loss.backward()

        encoder_optimizer.step()
        decoder_optimizer.step()

        return loss.item() / target_length
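
The post does not show which `criterion` is passed to `train`. The linked tutorial uses `nn.NLLLoss`, which pairs with the `log_softmax` output of the decoders. The sketch below assumes that choice and uses random stand-in values; `vocab_size = 6` and the target index are illustrative.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

vocab_size = 6  # illustrative vocabulary size

logits = torch.randn(1, vocab_size)       # stand-in for self.out(output[0])
log_probs = F.log_softmax(logits, dim=1)  # what the decoder returns, shape (1, vocab_size)
target = torch.tensor([2])                # stand-in for target_tensor[di], shape (1,)

# NLLLoss picks out the negative log-probability of the target word
nll = nn.NLLLoss()(log_probs, target)
print(torch.allclose(nll, -log_probs[0, 2]))  # True

# log_softmax + NLLLoss is equivalent to CrossEntropyLoss on the raw logits
ce = nn.CrossEntropyLoss()(logits, target)
print(torch.allclose(nll, ce))  # True
```

Because the loss is summed over decoding steps inside `train`, the function divides by `target_length` before returning so that sentences of different lengths are comparable.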

Evaluate Model

    # tensorFromSentence, input_lang and output_lang come from the omitted preprocessing.
    def evaluate(encoder, decoder, sentence, max_length=MAX_LENGTH):
        with torch.no_grad():
            input_tensor = tensorFromSentence(input_lang, sentence)
            input_length = input_tensor.size()[0]
            encoder_hidden = encoder.initHidden()

            encoder_outputs = torch.zeros(max_length, encoder.hidden_size, device=device)

            for ei in range(input_length):
                encoder_output, encoder_hidden = encoder(input_tensor[ei],
                                                         encoder_hidden)
                encoder_outputs[ei] += encoder_output[0, 0]

            decoder_input = torch.tensor([[SOS_token]], device=device)  # SOS
            decoder_hidden = encoder_hidden

            decoded_words = []
            decoder_attentions = torch.zeros(max_length, max_length)

            for di in range(max_length):
                decoder_output, decoder_hidden, decoder_attention = decoder(
                    decoder_input, decoder_hidden, encoder_outputs)
                decoder_attentions[di] = decoder_attention.data
                topv, topi = decoder_output.data.topk(1)
                if topi.item() == EOS_token:
                    decoded_words.append('<EOS>')
                    break
                else:
                    decoded_words.append(output_lang.index2word[topi.item()])

                decoder_input = topi.squeeze().detach()

            return decoded_words, decoder_attentions[:di + 1]
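
Evaluation decodes greedily: at each step the single most likely word is taken with `topk(1)` and fed back as the next input. The sketch below shows that one step in isolation. The `index2word` dictionary is a hypothetical stand-in for `output_lang.index2word`, and `EOS_token = 1` follows the linked tutorial rather than anything shown in this post.

```python
import torch

EOS_token = 1  # value used in the linked tutorial; an assumption here
index2word = {0: "SOS", 1: "EOS", 2: "hello", 3: "world"}  # hypothetical vocabulary

# Stand-in decoder output: log-probabilities over a 4-word vocabulary, shape (1, 4)
log_probs = torch.log(torch.tensor([[0.1, 0.2, 0.6, 0.1]]))

topv, topi = log_probs.topk(1)  # best value and its index, each of shape (1, 1)
word_index = topi.item()        # plain Python int
print(word_index)               # 2

if word_index == EOS_token:
    word = "<EOS>"
else:
    word = index2word[word_index]
print(word)  # hello

# The chosen index becomes the next decoder input (a 0-dim tensor)
next_input = topi.squeeze().detach()
print(next_input.dim())  # 0
```

Greedy decoding keeps only one hypothesis per step; it is the simplest choice and the one used in the code above.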
